When the AI Detector Calls

To AI or not to AI?

Greetings friends,

First, all the photographs here were taken without the assistance of AI. I just wanted to throw it out there from the outset.

________________________________________________________________________

I recently read Joshua Rothman’s article “Is It Wrong to Write a Book with A.I.?” in the latest issue of The New Yorker, and I found it more nuanced and useful than the usual alarmist hand-wringing or some techno-utopian crap. His basic point is that A.I. may end up in the arts the way the drum machine did in music: first dismissed as fake, mechanical, cheating, soulless, then gradually absorbed into the creative process in inventive and personal ways. The tool, Rothman argues, is not the whole story. What matters is how it is used. I heard that a lot when I was younger.

Rothman brings up the recent Shy Girl fiasco, which fascinated me. The novel was accused by some very discerning, conscientious readers of being at least partly A.I.-generated because of the telltale signs, such as too many adjectives, too many metaphors, a strangely uniform rhythm, prose that felt synthesized.

Pangram, an A.I.-detection company, reportedly judged that horror novel to be seventy-eight percent A.I.-generated. The author blamed a freelance editor, said the manuscript may have been run through A.I. without her consent, etc. Excuses, excuses. The publisher eventually pulled the book.

But that caught my attention because I’ve subscribed to Pangram myself. I pay twenty dollars a month for Pangram because I want my students to know I’m not asleep at the wheel, that I’m not just another gray-bearded, bald geezer who howls at the wind and .... What?

No, I don’t ban A.I. outright. I’m not interested in moral panic, and I’m not pretending these tools don’t exist. I allow students to use A.I. to ask questions, to brainstorm, to test structure, to push on ideas, to help them think through a problem. But... I require them to submit the prompts, questions, and comments they gave ChatGPT (or whichever platform they’re using) as part of the assignment. If you used it, show me how you used it.

That seems fair to me.

They are not always honest, of course. But I want them to know I’m paying attention.

Then I heard about Shy Girl, about how “too many adjectives” and “too many metaphors” were supposedly red flags. That gave me pause. I happen to love adjectives and metaphors. Maybe too much. Excess has always been one of my weaknesses as a writer, but at least it’s MY weakness. So out of curiosity I uploaded a paragraph from a short story I had been writing into Pangram.

It came back: 100 percent A.I.

Not maybe. Not mixed. One hundred percent!

I wrote every single effing word of it. I swear. Cross my heart and hope to die, stick a needle in my eye!

That was the moment the whole thing stopped feeling merely flawed and started throbbing with absurdity.

Because once Pangram declared my actual human writing fully synthetic, I found myself doing something grotesque — trying to humanize it for the machine. Destroying my own writing on purpose. Making it clunkier. Less fluid. Less like my own voice. In other words, I had to make it worse so it would read as more human.

That is fucked up!

You spend years trying to write better sentences. Sharper sentences. Sentences with rhythm, texture, pressure, surprise. You develop an ear, build a style, however flawed, however excessive. And then some goddamn algorithm tells you that your prose is TOO fluid, TOO metaphorical, TOO tonally consistent to have been written by a human. So what’s the lesson? Pull back? Flatten the rhythm? Sand down the language? Aim not to write better, but to write like an average bad writer?

We are now in the position of teaching ourselves to sound worse in order to seem more authentic to a machine.

At that point, frankly, I might as well quit writing and take up badminton.

A friend of mine from New Jersey (not the kind of person who sends me things at midnight with a subject line that just says “you need to read this”) forwarded me this LinkedIn article (at midnight, with the subject line “you need to read this”) by Michael Stover, a professional freelance writer, titled “The Great AI Detector Delusion: Why Using AI to Police Human Writers Is Hypocrisy at Its Finest.”

Stover’s central point is that AI detectors are fundamentally unreliable: they produce false positives at significant rates, disproportionately flag non-native English speakers, and penalize writers for having a distinctive style rather than for any actual AI use. But his sharpest observation is this: using an AI tool to render judgment on human writing is itself a form of hypocrisy. You are outsourcing a human, contextual act to the very kind of machine you claim to be guarding against. The detector doesn’t read. It pattern-matches. And pattern-matching is not reading any more than a paint-by-numbers kit is painting.

Let’s compare A.I. to a real writing workshop.

In a workshop, you bring in pages. Your classmates respond to the choices you made. They ask why a character did this, why the scene turns there, why a line of dialogue feels false, why the ending arrives too late, why the central conflict remains muddy, etc. Sometimes they’re right. Sometimes they’re wrong. Sometimes they misunderstand the scene entirely. But even that misunderstanding is useful, because it tells you something about what’s actually on the page. Then you go back and rewrite.

A.I. can be useful in the same preliminary way. It can help a student brainstorm. It can generate questions. It can point out that a scene lacks conflict, that a character’s want is vague, that the midpoint comes too late, that the stakes are generic. It can offer possibilities.

But the actual writing, the words, the images, the rhythms, the dramatic choices, has to come from the writer.

Otherwise what are we writing and what are we teaching?

Rothman gets at something similar in his article when he notes that many forms of writing are already collaborative in ways we ignore. Journalism has editors. Television has writers’ rooms. James Patterson has what is essentially a novel factory. He often works by generating the concept, the premise, and the detailed outline, then handing all that to collaborators who draft the manuscript. Rothman notes that Patterson can supervise around thirty projects at once and publish about fifteen books a year.

Context changes what we mean by authorship.

I’m not against students using A.I. to think. I’m against students using it to avoid thinking.

I’m not against tools. But I’m certainly against the fantasy that Pangram, or any other detection tool, can settle these questions for us. It can’t. Stover puts it plainly: institutions and individuals who defer to flawed technology to make critical judgments about people’s honesty, craft, or intelligence are not solving a problem. They’re creating a new one. A student may sound artificial and a machine may sound certain. But the truth is messier than either one.

So yes, I still tell my students to show me their prompts if they use A.I. I still want transparency. I still think undeclared use is a form of dishonesty.

But I no longer believe that Pangram, or any other detector, is an oracle.

Sometimes the machine is right. Sometimes the machine is effing ridiculous.

And sometimes the only trustworthy test is the oldest one. Does this sound like a person making choices on the page, or like the page making the choices for them?

What do you think?

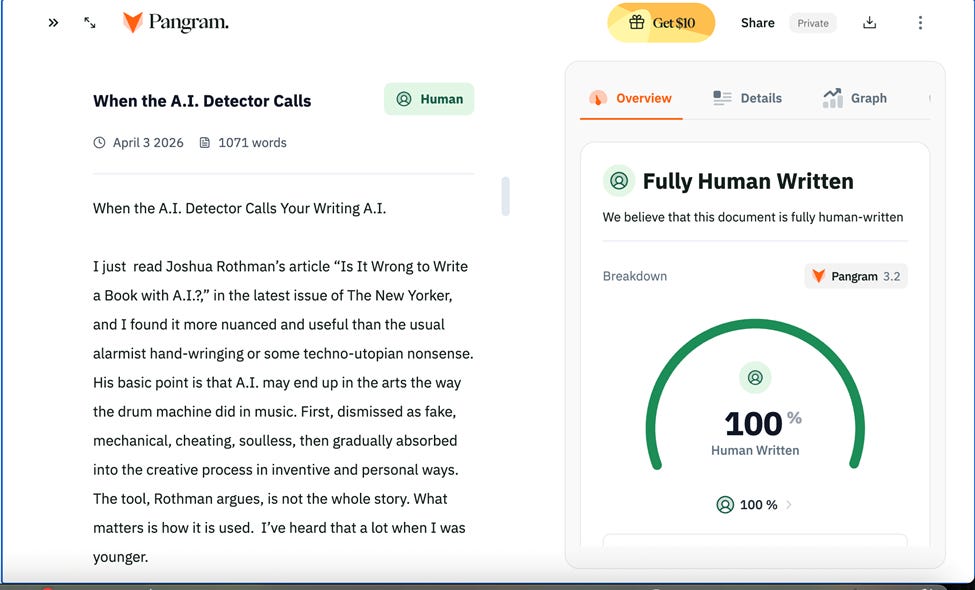

P.S. I couldn’t help myself and plugged the entire text of this post into Pangram. Phew!

___________________________________________________________________________

In Other Realms of Happenings

Law & Copyright

In March 2026, the U.S. Supreme Court declined to hear the case of Stephen Thaler, who claimed copyright for art made by his AI system, the “Creativity Machine.” (DABUS is the system at the center of his separate patent cases.) The denial left in place what lower courts had found: AI-generated work without a human creator cannot be copyrighted.

On January 22, 2026, the Human Artistry Campaign — backed by nearly 800 creators including Billy Corgan, Bonnie Raitt, and members of R.E.M. — launched a campaign called “Stealing Isn’t Innovation,” protesting the mass unauthorized harvesting of copyrighted works to train AI models.

🎵 Music

AI music startup Suno, after a $250 million Series C round and a $2.45 billion valuation, can reportedly generate a catalog equivalent to Spotify’s entire library every two weeks.

AI music tools like AIVA, Suno, Udio, and Google DeepMind’s MusicLM are now used daily by professional composers in film and TV sync licensing — no longer experimental, but production-ready.

Artist Holly Herndon has pioneered ethical AI vocal experimentation, building partnerships with machine learning systems that use consent-based data models — showing that AI and artist rights don’t have to be at odds.

🔍 AI Detection

The most accurate AI detectors in 2026 — GPTZero, Winston AI, and YouScan — claim 99%+ detection rates on pure AI-generated text, but all struggle significantly on passages under 50 words.

Here’s the catch: when AI text is run through “humanizing” tools before submission, detection rates collapse dramatically — Turnitin drops to 5.1% accuracy and Originality.ai to just 7.8%. The arms race is very real.

🎨 Visual Arts

Generative AI could automate up to 26% of tasks in the arts, design, entertainment, media, and sports sectors, according to recent research.

Artists like Stephanie Dinkins use AI to create platforms that invite viewers into dialogues about technology, race, gender, and aging — using the machine as a provocation tool rather than just a generator.

📊 Broader Trends

MIT Sloan analysts predict that 2026 will see a deflation of the AI bubble, with the focus shifting from AI as an individual tool to AI as an organizational resource — a significant cultural and institutional shift.

One striking paradox: generative AI is capable of producing new styles and combinations, yet critics warn it risks creating a self-referential loop — training on AI-generated data could eventually make the outputs increasingly derivative and creatively stagnant.

Thanks for reading and being a subscriber.

’Til next time.

ak

Really enjoyed the thinking in this piece. The territory has significantly shifted out there and we're all trying to make new maps of it. It's taken us years or even decades to form useful models about other technologies, so it seems foolish to believe what anyone (or any machine, these days) says with a tone of absolute certainty about AI--it's simply too early.

I'm a software engineer by trade and use AI every day now. Sometimes I think I'm being paid for my skepticism--I am _always_ testing it for quality and accuracy. But I've found ways to get quite a lot of help out of it. It's just not magic: it's constant vigilance. Kind of like you can't fall asleep on the power drill. You always have to drive it, and drive it true.

> I’m not against students using A.I. to think. I’m against students using it to avoid thinking.

For me, this was the gem here--it really clarified, at a high level, what "good" means when it comes to how AI is used.

The exact same thing happened to me!!! I too love metaphors. Use them all the time.... thanks for bringing this to light, Alex.